Multi-agent systems are often described as if parallelism alone were the main advantage. Recent engineering guidance points in a more practical direction. The useful part is not simply that several agents can run at once, but that work can be decomposed into bounded responsibilities with clear handoffs. That framing matters because it makes the system easier to steer, easier to review, and less dependent on vague coordination.

Summary

Anthropic's engineering write-up on its multi-agent research system is unusually direct on this point: subagents perform better when the lead agent gives them an objective, an output format, guidance on tools and sources, and clear task boundaries. Without that, agents duplicate work, leave gaps, or misread the assignment. OpenAI's documentation on structured outputs reaches a compatible conclusion from the API side: predictable schemas make downstream interoperability safer because each component can expect a defined shape rather than free-form output.

Together, those sources support a grounded claim: parallel execution needs structure. Multi-agent workflows become more useful when each agent owns a bounded job and returns something another component can consume reliably.

What happened

In public documentation and engineering notes over the last year, the conversation around agents has shifted from generic autonomy to operational design. The more credible material now focuses on orchestration patterns, tool selection, evaluation, and output discipline rather than on the idea that agents become effective merely by being allowed to act independently.

That matters because multi-agent execution introduces coordination cost. Every new agent adds another context, another task description, another result, and another chance for ambiguity. If responsibilities are vague, the system spends capacity coordinating confusion instead of solving the task.

Why it matters

Teams evaluating agent systems usually care about practical questions: can the work be reviewed, can outputs be trusted enough to feed the next step, and can errors be traced back to the right stage? Clear task boundaries improve all three.

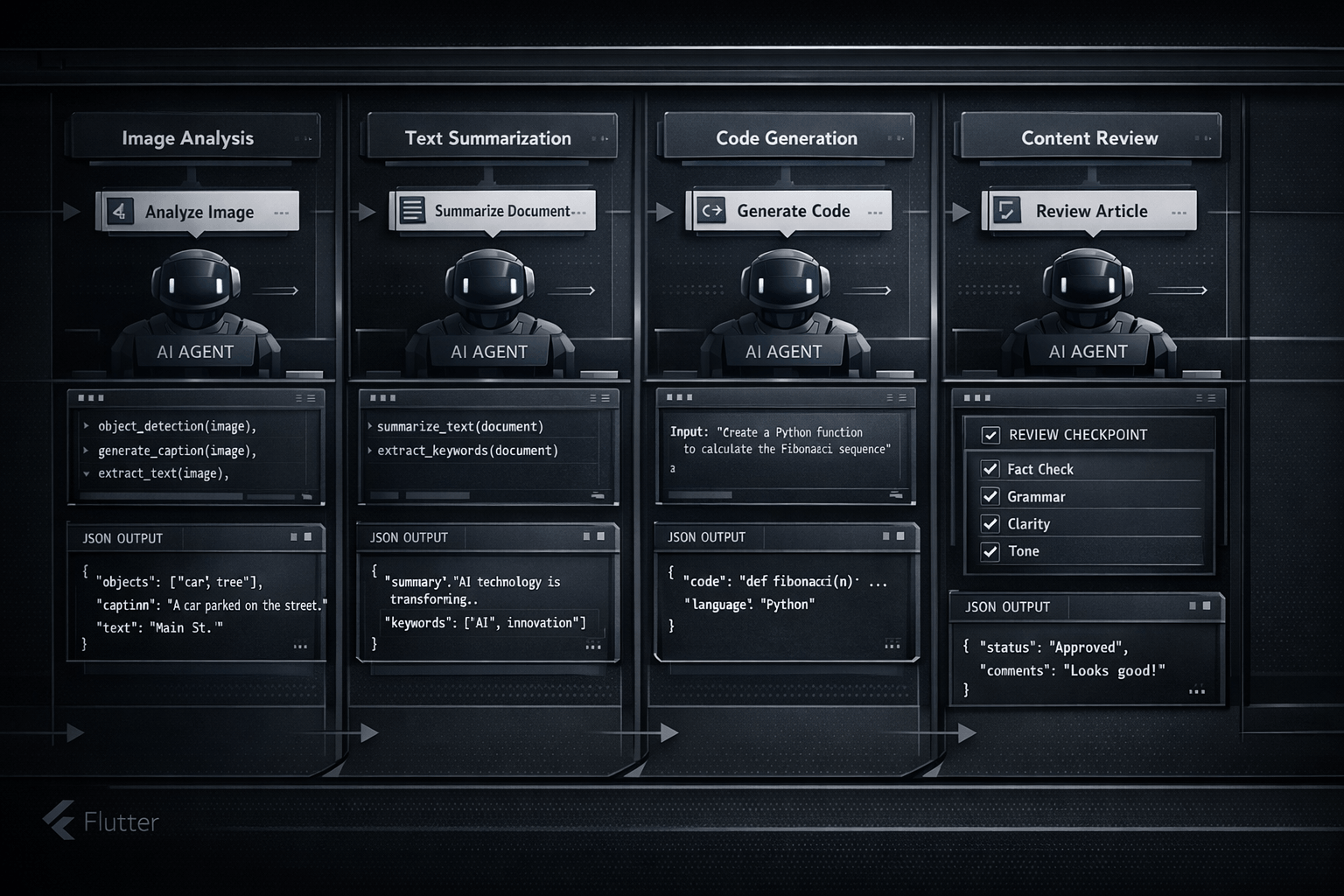

When one agent researches, another summarizes, another validates structure, and a lead process decides whether more work is needed, the system becomes easier to reason about. If the output is wrong, there is a narrower place to inspect. If the output is right, it is easier to reuse. This is more useful than a loosely coordinated swarm because the workflow stays legible.

Multi-agent execution works best when coordination is treated as a design problem, not as an emergent property that will resolve itself.

Why task boundaries matter

Task boundaries do three jobs at once. First, they reduce overlap by telling each agent what it should and should not do. Second, they make evaluation easier because success can be tied to a narrower objective. Third, they improve handoffs because the receiving step knows what kind of output to expect.

Anthropic describes this concretely: each subagent benefits from a specific objective, output expectations, tool guidance, and a bounded scope. OpenAI's structured-output guidance provides the matching implementation discipline: if outputs conform to a schema, later stages do not need to infer missing keys or reinterpret ad hoc formatting.

In other words, agents work better with well-defined inputs and predictable outputs because ambiguity no longer leaks across the whole system. The boundary is not only about responsibility; it is also about the contract for exchanging work.

Parallel execution examples

A useful pattern is to split independent directions of work rather than fragments of the same uncertain task. One agent can inspect documentation, another can produce a candidate implementation, and a third can normalize the result into a structured payload for review. That kind of parallelism compounds value because outputs can be compared, validated, or merged without each agent guessing what the others meant.

The same logic applies in a Flutter context. A Nic Hyper Flow workflow might delegate one bounded agent to inspect repository files, another to review Firestore or project context, and another to prepare content or assets. This is not useful because "more agents" is automatically better. It is useful because each step can own a real task and return a predictable artifact.

Once outputs are explicit, parallel work becomes reviewable. A human or lead agent can compare JSON metadata, HTML content, asset references, or validation notes without reconstructing the full reasoning path from raw conversation alone.

Connection to Nic Hyper Flow

Nic Hyper Flow is a practical context for this framing because it treats agents as working components inside a real tool environment. For Flutter-oriented teams, that matters: the useful question is not whether an agent can talk at length, but whether it can own a task, use the right tools, and leave behind an inspectable result.

That is why the multi-agent capability is more credible when tied to explicit task ownership. One agent can work on implementation, another on validation, another on research or content preparation, while the orchestrating step retains control over boundaries and acceptance criteria. The workflow is stronger because its outputs are easier to audit and because failures are easier to localize.

In that sense, Nic Hyper Flow's multi-agent execution is most useful when it stays disciplined: bounded tasks, structured handoffs, and outputs that can be reviewed in sequence rather than interpreted after the fact.

Conclusion

Multi-agent workflows are becoming more useful, but not for the vague reason that "many agents can do more." The stronger reason is that parallel work becomes dependable when each agent has a clearly bounded assignment, receives well-defined inputs, and returns a predictable output.

That is the more grounded way to think about multi-agent systems in 2026. Parallel execution needs structure. Clear task boundaries turn distributed reasoning from a loose idea into something technical teams can actually review, debug, and use.

Sources

The article draws on the following pages read during preparation:

- Anthropic — How we built our multi-agent research system

- OpenAI API docs — Structured model outputs

- Flutter documentation — Testing & debugging

Frequently Asked Questions

How does Nic Hyper Flow improve mobile debugging?

It allows developers to send screenshots directly from a phone to an AI agent that has full context of the repository, enabling faster diagnosis and code fixes.

Does it support Flutter hot reload?

Yes, Nic Hyper Flow is designed to work with Flutter's hot reload, allowing you to apply agent-generated fixes and see the results on your device instantly.